Google and Claude's moves signal MCP adoption is accelerating into production. Builders need production-ready patterns to implement it effectively.

Builders can now build MCP servers that work across multiple AI platforms simultaneously, creating more distribution leverage from single implementations.

Signal analysis

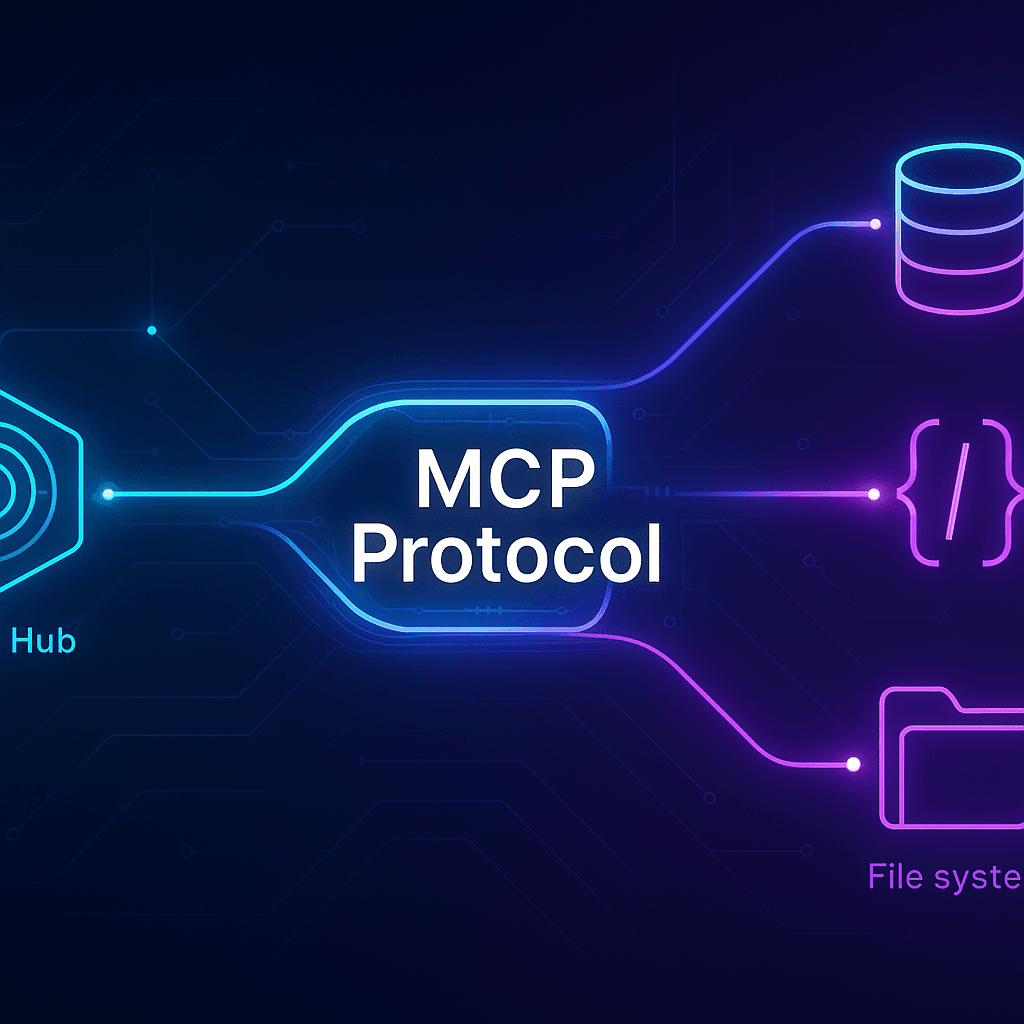

Here at Lead AI Dot Dev, we tracked a critical inflection point. Google shipping Colab MCP Server and Claude gaining native database, API, and file system access together signal that Model Context Protocol is transitioning from experimental to foundational infrastructure. This isn't theoretical anymore. When major platforms standardize on a protocol, builders who haven't implemented it start losing capability parity.

The dev.to post from young_gao demonstrates this shift by providing production-ready patterns for TypeScript MCP servers. The timing matters. Six months ago, MCP was an Anthropic experiment. Today it's the connective tissue between AI systems and external infrastructure. This acceleration means the window for builders to establish MCP competency is closing rapidly.

What makes this significant: MCP abstracts away the friction of connecting agents to tools. Instead of each AI provider building custom integrations with databases, APIs, and file systems, MCP creates a standard contract. That contract is becoming the industry baseline.
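To make the "standard contract" idea concrete, here is a minimal sketch that uses no SDK. It assumes MCP's JSON-RPC 2.0 framing with the `tools/list` and `tools/call` methods. The `echo` tool, the `handleRequest` function, and the trimmed-down types are hypothetical illustrations, and the tool listing omits the input schema a real server would advertise.

```typescript
// SDK-free sketch of a tool contract. Shapes loosely follow MCP's JSON-RPC 2.0
// framing; the registry, tool name, and function names are assumptions.
type JsonRpcRequest = {
  jsonrpc: "2.0";
  id: number | string;
  method: string;
  params?: Record<string, unknown>;
};

type ToolResult = {
  content: { type: "text"; text: string }[];
  isError?: boolean;
};

type JsonRpcResponse =
  | { jsonrpc: "2.0"; id: number | string; result: unknown }
  | { jsonrpc: "2.0"; id: number | string; error: { code: number; message: string } };

type Tool = {
  description: string;
  run: (args: Record<string, unknown>) => ToolResult;
};

// Hypothetical registry holding one illustrative tool.
const tools = new Map<string, Tool>();
tools.set("echo", {
  description: "Returns the provided text unchanged.",
  run: (args) => ({ content: [{ type: "text", text: String(args.text ?? "") }] }),
});

function handleRequest(req: JsonRpcRequest): JsonRpcResponse {
  if (req.method === "tools/list") {
    const list: { name: string; description: string }[] = [];
    tools.forEach((tool, name) => list.push({ name, description: tool.description }));
    return { jsonrpc: "2.0", id: req.id, result: { tools: list } };
  }
  if (req.method === "tools/call") {
    const name = String(req.params?.name ?? "");
    const tool = tools.get(name);
    if (!tool) {
      // -32602 is the JSON-RPC "invalid params" code.
      return { jsonrpc: "2.0", id: req.id, error: { code: -32602, message: `Unknown tool: ${name}` } };
    }
    const args = (req.params?.arguments ?? {}) as Record<string, unknown>;
    return { jsonrpc: "2.0", id: req.id, result: tool.run(args) };
  }
  // -32601 is the JSON-RPC "method not found" code.
  return { jsonrpc: "2.0", id: req.id, error: { code: -32601, message: `Method not found: ${req.method}` } };
}
```

Any client that speaks the same two methods can discover and call the tool without provider-specific glue, which is the point of the contract. A production server would more likely build on an official SDK than hand-roll the dispatch.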

The dev.to guide focuses on TypeScript specifically because it's the language most web builders use. That's intentional friction reduction. MCP servers expose a clean interface: input, processing, output. The patterns shown handle the critical production concerns: error handling, resource management, context limits, and security boundaries.
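Two of those concerns, context limits and security boundaries, can be sketched with small guard functions. This is our own illustration and is not taken from the dev.to guide. The names `truncateForContext` and `resolveInsideRoot` and the 4000-character limit are assumptions, and the path check handles only POSIX-style relative paths and does not account for symlinks.

```typescript
// Illustrative guards; the limit and function names are arbitrary assumptions.
const MAX_OUTPUT_CHARS = 4000;

// Cap tool output so one large result cannot crowd out the agent's context.
function truncateForContext(text: string, maxChars: number = MAX_OUTPUT_CHARS): string {
  if (text.length <= maxChars) return text;
  const omitted = text.length - maxChars;
  return `${text.slice(0, maxChars)}\n[truncated ${omitted} characters]`;
}

// Resolve a POSIX-style relative path against a root directory.
// Returns null when the request is absolute or would climb above the root.
function resolveInsideRoot(root: string, requested: string): string | null {
  if (requested.startsWith("/")) return null;
  const parts: string[] = [];
  for (const segment of requested.split("/")) {
    if (segment === "" || segment === ".") continue;
    if (segment === "..") {
      if (parts.length === 0) return null; // would escape the root
      parts.pop();
    } else {
      parts.push(segment);
    }
  }
  const base = root.replace(/\/+$/, "");
  if (parts.length === 0) return base || "/";
  return `${base}/${parts.join("/")}`;
}
```

A file-reading tool would call `resolveInsideRoot` before touching the disk and pass its output through `truncateForContext` before returning it, so both limits are enforced in one place and not inside each tool.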

Builders implementing MCP servers should focus on three concrete requirements. First, understand what capability you're exposing. Don't build MCP servers for everything; target specific integrations that agents genuinely need repeated access to. Second, handle async operations correctly and build explicit error contracts so agents understand failure modes. Third, test against actual Claude instances and other MCP clients to ensure your implementation follows the spec.
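The second requirement is the easiest to get wrong, so here is a minimal sketch of one way to approach it. It assumes a small closed set of error codes plus a `retryable` flag. The codes, `runTool`, `withTimeout`, and `invalidInput` are all hypothetical names for illustration and are not part of the MCP spec or any SDK.

```typescript
// Hypothetical error contract: a closed set of codes an agent can branch on.
type ToolErrorCode = "TIMEOUT" | "INVALID_INPUT" | "UPSTREAM_FAILURE";

type ToolOutcome<T> =
  | { ok: true; value: T }
  | { ok: false; code: ToolErrorCode; message: string; retryable: boolean };

// Errors are tagged by name so classification does not depend on subclassing.
function invalidInput(message: string): Error {
  const err = new Error(message);
  err.name = "InvalidInputError";
  return err;
}

// Reject if the work does not settle within `ms` milliseconds.
// Note: this stops waiting; it does not cancel the underlying operation.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => {
      const err = new Error(`Timed out after ${ms} ms`);
      err.name = "TimeoutError";
      reject(err);
    }, ms);
    work.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (error) => { clearTimeout(timer); reject(error); },
    );
  });
}

// Run async work and convert every failure into the explicit contract.
async function runTool<T>(work: () => Promise<T>, timeoutMs: number): Promise<ToolOutcome<T>> {
  try {
    return { ok: true, value: await withTimeout(work(), timeoutMs) };
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    const name = err instanceof Error ? err.name : "";
    if (name === "TimeoutError") return { ok: false, code: "TIMEOUT", message, retryable: true };
    if (name === "InvalidInputError") return { ok: false, code: "INVALID_INPUT", message, retryable: false };
    return { ok: false, code: "UPSTREAM_FAILURE", message, retryable: true };
  }
}
```

The design choice here is that nothing escapes `runTool` as a thrown exception. The agent always receives either a value or a coded failure that tells it whether retrying makes sense.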

The production readiness of these patterns means you can move past proof-of-concept. If you've been sitting on an MCP implementation, the maturity of tooling and documentation now justifies shipping it.

When Google moves in the same direction as Anthropic on a protocol standard, the industry follows. We're watching the same pattern that made REST the default style for web APIs and JSON the default data format. MCP is becoming that layer for agent-to-tool communication. This consolidation has direct implications: platforms that adopt MCP gain access to a growing ecosystem of pre-built servers. Builders who ship MCP implementations get distribution across multiple AI platforms simultaneously.

The acceleration is creating a winner-take-most dynamic in specific MCP server categories. If you're building database connectors, API adapters, or file system bridges, the first well-executed production implementation in your category will set the reference standard. Later implementations face the burden of proving they're better. This window is open now but closing fast.

Thank you for listening, Lead AI Dot Dev
